Top Trends & Topics

Digital Marketing

Everything You Need to Know to Get Started with Google Analytics 4

- October 10, 2022

- 3 min.

TechArk News

The Latest Trends & Topics

SEO, Web Hosting

The Need for Speed: How Quality Hosting Can Improve Core Web Vitals

- March 29, 2024

- 4 min.

Web Design, WordPress

Should I Pick a Theme or Custom Design for my WordPress Website?

- March 21, 2024

- 3 min.

Website Maintenance

Prevent & Manage Website Disruptions: Tips for Business Success

- November 6, 2023

- 3 min.

Website Design + Development

Designed to Scale: How WordPress Powers Your Business Growth

- October 31, 2023

- 4 min.

Google Ads

Empowering Nonprofits with Google Ad Grants: Amplify Your Impact Online

- October 5, 2023

- 3 min.

Software Development

Stop with the Spreadsheets! How to automate your business workflows

- October 4, 2023

- 4 min.

Company News

TechArk Solutions Named an Inc. 5000 Fastest-Growing Private Company For Third Year

- August 16, 2023

- 2 min.

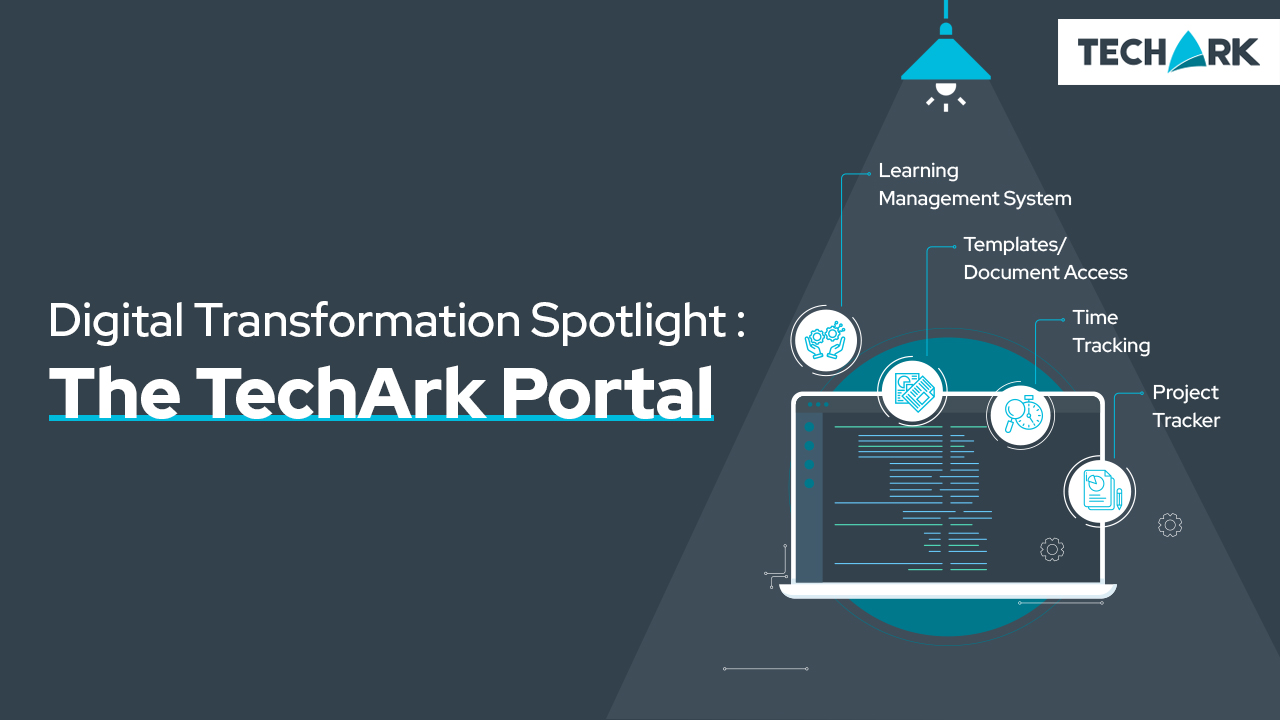

Digital Transformation

Why Automation is at the Core of Digital Transformation

- April 25, 2023

- 4 min.